Aims

Can we easily put control of personalisation algorithms in the hands of our editors and journalists?

Project

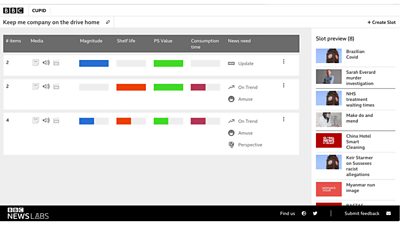

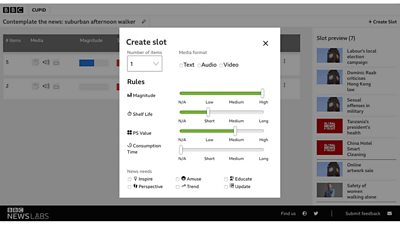

Many online platforms and websites use machine learning recommendations to create personalised experiences. This project takes a different approach: it tests whether we can create a better audience experience by putting editors in control of personalisation.

We're building a prototype that allows journalists and editors to define curations and offers a preview of the different experiences they are creating. This concept takes inspiration from a tool that is already in production at Swedish Radio.

The prototype relies on well-described clips of audio content to make up the experiences. We are using our Slicer prototype to chop up linear output into coherent, standalone clips of audio.

How to codify the editorial decisions of curation

Editors and journalists across the BBC make daily judgment calls about which stories to commission and where to place them in programmes for audiences across the UK. Defining the parameters behind those calls is an ongoing conversation. To get the ball rolling, we are using:

- Magnitude: the impact of the story

- Shelf life: the length of time the story will remain relevant

- Public service value: unique BBC qualities of the story to serve audiences

- Consumption time: duration of the story

- News needs: a set of six values that describe the purpose of a story for the audience (inspire me, amuse me, educate me, give me perspective, keep me on trend, update me)

Clips are annotated with values for each of the above parameters. In the prototype tool, editors set a score for each of these same parameters to describe the type of content they want returned in a slot.
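
To make the idea of matching annotated clips to an editor's slot more concrete, here is a minimal sketch in Python. It is not the prototype's actual code. Everything in it is an assumption made for illustration: the 1 to 5 scoring scale, the field names, the single news need per clip, the "closest total difference wins" rule, and the example clip titles.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Clip:
    """An audio clip annotated with editorial parameters (hypothetical schema)."""
    title: str
    scores: Dict[str, int]   # assumed 1-5 scale per parameter
    news_need: str           # assumed: one of the six news needs per clip


@dataclass
class Slot:
    """An editor-defined slot: target scores for the parameters that matter."""
    name: str
    targets: Dict[str, int]
    news_need: Optional[str] = None  # None means any news need is acceptable


def distance(clip: Clip, slot: Slot) -> int:
    """Sum of absolute differences between clip scores and slot targets."""
    return sum(abs(clip.scores[param] - target)
               for param, target in slot.targets.items())


def fill_slot(slot: Slot, clips: List[Clip]) -> Optional[Clip]:
    """Return the clip closest to the slot's targets, or None if nothing fits."""
    candidates = [c for c in clips
                  if slot.news_need is None or c.news_need == slot.news_need]
    return min(candidates, key=lambda c: distance(c, slot), default=None)


# Invented example data, for illustration only.
clips = [
    Clip("Budget announcement",
         {"magnitude": 5, "shelf_life": 2,
          "public_service_value": 4, "consumption_time": 3},
         "update me"),
    Clip("Garden birdsong guide",
         {"magnitude": 1, "shelf_life": 5,
          "public_service_value": 3, "consumption_time": 2},
         "inspire me"),
    Clip("How interest rates work",
         {"magnitude": 3, "shelf_life": 5,
          "public_service_value": 5, "consumption_time": 4},
         "educate me"),
]

evergreen = Slot("evergreen explainer",
                 {"shelf_life": 5, "public_service_value": 5})
headline = Slot("top story", {"magnitude": 5}, news_need="update me")

print(fill_slot(evergreen, clips).title)
print(fill_slot(headline, clips).title)
```

A slot only needs to name the parameters the editor cares about, so the same clip pool can serve very different experiences. A real tool would likely need weighting between parameters and a way to avoid repeating clips across slots, neither of which is covered here.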

Our first iteration of this project is using a small subset of audio clips from a week's output at the BBC.

Next steps

We are looking at continuing this experiment by extending it to text articles and trialling the output with pilot users in the newsroom and, potentially, with audiences.

Tweet us @BBC_News_Labs if you want to find out more.

Results

Initial feedback from our first demo to over 100 people at the BBC was extremely positive. There is now a lot of interest and excitement from potential users for this prototype.

