Aims

Can we make image search more efficient to improve the quality of images used on all types of news stories while also helping journalists under time pressure?

Outline

Compelling imagery is an essential part of online journalism. Its importance continues to grow at the BBC with a more visual news app in the works. For journalists under pressure to rapidly publish stories, finding great pictures quickly is tough.

The Oriel project aims to make the process of selecting images more efficient by:

- Implementing the latest machine learning technology to make relevant images more discoverable

- Facilitating collaboration on collections of images to allow experts to share the best images in their domain across the newsroom

- Automating searches for the latest pictures on certain stories or topics to save journalists' time

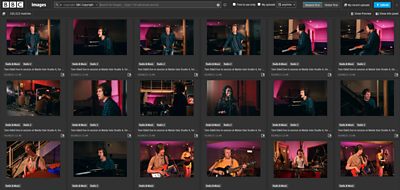

The prototype in development in News Labs is inspired by an ongoing project in BBC News to build a new image search tool for journalists. We've started experimenting with ways to make the tool's search results more relevant by generating extra data about each image we have available.

Better results through better data

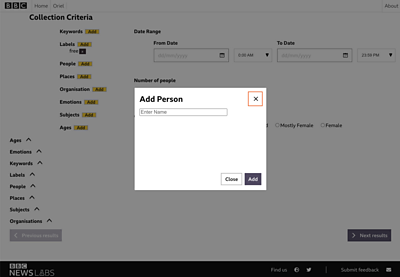

To add this extra metadata, we're using Rekognition, an image analysis service from Amazon Web Services. By analysing the content of each image with its machine learning models, we can add extra characteristics to a search index.
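As a rough illustration of this enrichment step, the sketch below flattens a face analysis response into fields that could be stored in a search index. It assumes a dictionary shaped like the response of Rekognition's DetectFaces operation (`FaceDetails`, `Emotions`, `Gender`). The function name, the output field names, the confidence threshold and the use of the face count as a stand-in for the number of people are all assumptions made for this example, not a description of the actual Oriel pipeline.

```python
from collections import Counter

# A response of this shape could be obtained with boto3, for example:
#   boto3.client("rekognition").detect_faces(
#       Image={"Bytes": image_bytes}, Attributes=["ALL"])
# The function below only works on the returned dictionary, so it runs offline.


def summarise_faces(response, min_confidence=80.0):
    """Turn a DetectFaces-style response into flat, indexable fields.

    The field names and the 80% threshold are hypothetical choices.
    """
    faces = response.get("FaceDetails", [])
    emotions = Counter()
    genders = Counter()
    for face in faces:
        # Keep only the strongest emotion per face, if it is confident enough.
        candidates = face.get("Emotions", [])
        if candidates:
            top = max(candidates, key=lambda e: e["Confidence"])
            if top["Confidence"] >= min_confidence:
                emotions[top["Type"].lower()] += 1
        gender = face.get("Gender")
        if gender and gender["Confidence"] >= min_confidence:
            genders[gender["Value"].lower()] += 1
    return {
        # Assumption: the number of detected faces approximates the number of people.
        "people_count": len(faces),
        "emotions": sorted(emotions),
        "gender_counts": dict(genders),
    }


sample_response = {
    "FaceDetails": [
        {
            "Emotions": [
                {"Type": "HAPPY", "Confidence": 97.1},
                {"Type": "CALM", "Confidence": 2.0},
            ],
            "Gender": {"Value": "Female", "Confidence": 99.0},
        },
        {
            "Emotions": [{"Type": "SURPRISED", "Confidence": 88.4}],
            "Gender": {"Value": "Male", "Confidence": 95.2},
        },
    ]
}

print(summarise_faces(sample_response))
```

Keeping the summary step separate from the service call means the derived fields can be adjusted, and tested, without re-analysing every image.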

We've then made these fields available for journalists to query in a prototype user interface. This means they can create more refined searches to get the sort of images they need for a particular story quickly.

We spoke to many of our colleagues in the newsroom to understand how they would like to filter results. So far, the characteristics we've experimented with include:

- Emotion: Specify the sentiment of the image based on the analysis of facial expressions, e.g. happy, surprised

- Gender balance: Filter based on the predominance of genders photographed

- Number of people: Differentiate easily between group shots and close-ups
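The filters above could be sketched as simple predicates over enriched image records. This is a minimal in-memory example, not the prototype's actual query layer: the `ImageRecord` fields, the `search` function and its parameters are hypothetical names chosen for illustration, and "gender balance" is reduced here to the single most photographed gender.

```python
from dataclasses import dataclass, field


@dataclass
class ImageRecord:
    """Hypothetical enriched record for one image in the search index."""
    image_id: str
    people_count: int = 0
    emotions: list = field(default_factory=list)
    gender_counts: dict = field(default_factory=dict)


def predominant_gender(record):
    """Return the most photographed gender, or None when tied or unknown."""
    counts = sorted(record.gender_counts.items(), key=lambda kv: kv[1], reverse=True)
    if not counts:
        return None
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None
    return counts[0][0]


def search(records, emotion=None, min_people=None, max_people=None, gender=None):
    """Return the records that satisfy every filter that was supplied."""
    results = []
    for record in records:
        if emotion is not None and emotion not in record.emotions:
            continue
        if min_people is not None and record.people_count < min_people:
            continue
        if max_people is not None and record.people_count > max_people:
            continue
        if gender is not None and predominant_gender(record) != gender:
            continue
        results.append(record)
    return results


library = [
    ImageRecord("img-1", 1, ["happy"], {"female": 1}),
    ImageRecord("img-2", 6, ["happy", "surprised"], {"female": 2, "male": 4}),
    ImageRecord("img-3", 2, ["calm"], {"female": 1, "male": 1}),
]

# A happy close-up: at most one person in shot.
close_ups = search(library, emotion="happy", max_people=1)
print([record.image_id for record in close_ups])
```

In a real deployment these conditions would more likely be expressed as queries against the search index itself; the loop is only meant to show how the fields combine.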

Collaborative curation

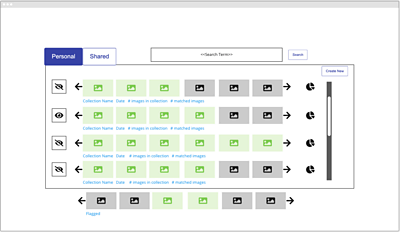

Better search results will help our journalists, but we've also started experimenting with a way to make it easier for them to help each other. A second piece of functionality in the prototype will allow journalists to save searches, further curate the resulting set of images, and share both the criteria used and the resulting collection of pictures across the newsroom.

We hope this could lead to the best editorially approved images, on topics for which good visuals are often difficult to find, being discovered by journalists who are not specialists in these areas. On top of this, an experimental feature would automatically update these collections with the most recent images matching the saved criteria, and notify journalists subscribed to these searches.

One use case would be a breaking story, such as developments at COP26, on which a team are updating a live page throughout the day.
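The saved search idea described in this section could be modelled roughly as follows. This is only a sketch under stated assumptions: the `SavedSearch` class, its `refresh` method, the `notify` callback and the `tags` field on each image are hypothetical, invented for this example rather than taken from the prototype.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SavedSearch:
    """Hypothetical saved search: shared criteria plus the curated collection."""
    name: str
    matches: Callable[[dict], bool]  # the saved criteria, as a predicate
    image_ids: list = field(default_factory=list)
    subscribers: list = field(default_factory=list)

    def refresh(self, new_images, notify):
        """Add newly matching images and tell subscribers about them."""
        seen = set(self.image_ids)
        added = []
        for image in new_images:
            if image["id"] not in seen and self.matches(image):
                added.append(image["id"])
                seen.add(image["id"])
        self.image_ids.extend(added)
        # Only notify when the collection actually changed.
        if added:
            for subscriber in self.subscribers:
                notify(subscriber, self.name, added)
        return added


sent = []
cop26 = SavedSearch(
    name="COP26",
    matches=lambda image: "cop26" in image["tags"],
    subscribers=["live-page-team"],
)
incoming = [
    {"id": "a1", "tags": ["cop26", "glasgow"]},
    {"id": "a2", "tags": ["football"]},
]
added = cop26.refresh(incoming, notify=lambda who, name, ids: sent.append((who, name, ids)))
print(added, sent)
```

Running `refresh` again with the same images adds nothing and sends no notification, so a scheduled job could call it repeatedly without flooding subscribers.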

Next steps

We are tweaking the metadata fields to best meet the needs of journalists. We've started experimenting by taking snapshots of the entire image library on certain days, so that journalists can give feedback on how the enhanced search results compare with those from the existing tools.

Tweet us @BBC_News_Labs if you want to find out more.

Results

- The first iteration of the prototype is giving very positive results compared to existing systems. The metadata enrichment pipeline shows great potential to enable a more efficient process for journalists.
